
Interaction for Computer-Aided Learning

Sissel Guttormsen Schär, S. Schluep, C. Schierz, H. Krueger

Swiss Federal Institute of Technology, Switzerland

Abstract

Five experiments were performed in order to investigate the effect of the computer user-interface on learning performance. The theoretical motivation was to validate the relevance of a cognitive theory about two modes of learning in a human-computer interaction (HCI) context. In all the experiments, factors estimating the theoretical principles were compared with factors estimating heuristic measures of user-friendliness. The experiments showed consistently that the two learning modes can be induced by different user-interfaces, and that the induced learning mode has an effect on the learning performance. Further, this research showed that the different sets of measures resulted in recommendations for different user-interfaces. In general, the theoretical measures supported interfaces that improved learning performance, and the heuristic measures supported interfaces that resulted in shorter task solving times and higher satisfaction. A combination of both design principles would aim at implementing interfaces supporting both high learning performance and high satisfaction.

1. Introduction

Today, computing power is no longer a limitation for implementation of learning tools. The problem has moved from how to implement to what tools to implement and why. This paper provides a basis for choosing different interface features for learning tools and demonstrates the impact of theory on interface design. Current research on computer aided learning (CAL) is very focused on how to represent the learning content and tends to neglect the impact of the user-interface in the learning process. Our research shows that an integration of interface design with the representation of the learning content is important.

Interface design can be theory driven (the particular theory may be task related) or based on human centred design principles. In the first case, theory guides implementation as, for example, in Gibson's perceptual approach; the constructivistic approach (e.g. Duffy and Jonassen, 1992); the activity theory (Engström, 1990); or the information processing approach, a theory of implicit and explicit learning (Reber, Berry and Broadbent). The second case, i.e. the human centred design approach, strives for an interface that is attractive, easy and effective for the users (e.g. Shneiderman, 1987). The desktop metaphor is a typical product of this approach. Other tasks that take advantage of this approach are file manipulation, text editing, or use of public self-service machines (e.g. information kiosks, ticket vending machines, etc.). Although this approach also makes use of knowledge from different cognitive theories, research in this field is often characterised by comparative heuristic studies where particular interface features are tested for efficiency and satisfaction (e.g. Torres-Chazaro et al., 1992; Sengupta & Te'eni, 1991).

Complex computer mediated problem solving can take great advantage of interfaces that are designed from considerations that run deeper than simple user friendliness. The human centred design approach is not enough when an interface must support a task that goes beyond desktop handling. In complex tasks (e.g. computer-aided learning, computer-aided design, programming or process control) the user-interface becomes a part of the task solving process.

In this paper we report a series of studies that show that various aspects of the user interface affect the cognitive processes related to learning and problem solving. HCI is a complex domain in which information can be channelled through the human information processing system in subtle ways.

The effects of both theory and user centred design principles were investigated in our experiments. The theory was the key to the cognitive measures, and user centred design provided the framework for measures of satisfaction and efficiency. A comparison of the user centred approach with the theoretical approach showed that a consistent application of either approach would result in different suggestions for interaction design. In general, the theoretical approach resulted in interfaces that improved learning performance, but these interfaces were not necessarily the most time-efficient. On the other hand, the application of user-centred design principles resulted in interfaces that benefited from shorter task solving times and higher satisfaction, but this was at the cost of the learning performance. A combination of both design principles would aim at defining interfaces supporting both high performance and high satisfaction.

2. Theory

2.1 Implicit and Explicit Learning

The application of theory in the interface design process enables us to understand interaction in a wider context and to include task related factors in the interaction concept. In the series of experiments reported here, we employed a theory of implicit and explicit learning. The theory was applied to different ways of communicating information in HCI; i.e. through various interaction tools, navigation methods and feedback forms. Different HCI factors become a part of the information process in CAL. The theory of implicit and explicit learning helps us to understand how this complex information process takes place.

There is increasing evidence that people can learn in two different modes (e.g. Reber, 1989; Hayes & Broadbent, 1988). An explicit learning mode is characterised by rational, selective and conscious attention. Explicit learning implies observing a manageable number of variables from the environment and keeping count only of the contingencies between those variables. This learning mode is more demanding for the cognitive system. People who learn explicitly seem to do so by developing a mental model of the problem, which can be consulted, manipulated and communicated. It is this type of learning that we normally refer to as problem solving (Reber, 1989; Hayes & Broadbent, 1988). Implicit learning is an unconscious process that yields abstract knowledge. Implicit learning is similar to trial and error learning. It implies storing all the contingencies between all the variables at play. Reber defines implicit learning as "a general, modal-free process, a fundamental operation whereby critical co-variations in the stimulus environment are picked up" (Reber, 1989, p. 135).

The success of employing one of these modes depends on characteristics of the problem or the learning task. Every learning task or problem has a structure or rules, which essentially influence how the task should be solved and which solutions should be attempted. The task saliency refers to how obvious the rules or the structure are. The saliency (or complexity) of a problem is, in this research tradition, defined as the essential problem feature accounting for success when learning in one of the two modes (Berry & Broadbent, 1988; Hayes & Broadbent, 1988; Broadbent, FitzGerald & Broadbent, 1986). Saliency is defined by the degree to which the critical features of the stimulus material are obvious to the subjects, and by the amount of information that needs to be considered. These two factors are related: the more features that need to be considered, the less salient the problem is, because it is more difficult to keep track of the critical features. The saliency of a problem increases if the number of irrelevant factors in the situation is low, or if the key events are in accordance with general knowledge from outside the problem. One example comes from Reber's research, in which an artificial language consisting of strings of letters was developed. Only certain combinations of the letters would follow the rules of the artificial grammar. In the salient condition, the letter strings were organised in columns so that each column represented one underlying stimulus type (grammatical rule). In the non-salient condition the letter strings were shown randomly. After observing the letter strings, the subjects were shown new strings and asked to identify those that were grammatically correct.
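To make the artificial-grammar task more concrete, the following minimal sketch defines a small finite-state grammar and tests whether a letter string is grammatical. The grammar (its states, letters and transitions) is invented purely for illustration and is not the grammar used in Reber's studies; grouping strings by their first letter is likewise only an assumed stand-in for the column arrangement of the salient condition.

```python
# Hypothetical finite-state grammar: state -> {letter: next_state}.
# States, letters and transitions are invented for illustration only.
GRAMMAR = {
    0: {"T": 1, "P": 2},
    1: {"S": 1, "X": 3},
    2: {"V": 2, "X": 3},
    3: {"E": 4},
}
START, ACCEPT = 0, 4


def is_grammatical(string):
    """Return True if the string follows a path from START to ACCEPT."""
    state = START
    for letter in string:
        transitions = GRAMMAR.get(state, {})
        if letter not in transitions:
            return False  # no rule allows this letter here
        state = transitions[letter]
    return state == ACCEPT


def columns_by_type(strings):
    """Assumed 'salient' layout: one column per first letter of the string."""
    columns = {}
    for s in strings:
        columns.setdefault(s[0], []).append(s)
    return columns
```

In the non-salient condition the same strings would simply be presented in random order, so the learner receives no hint about which strings share an underlying rule.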

The learning modes can be induced relatively easily by external factors. In Reber's research, a learning mode could be induced in the subjects by means of the instructions (Reber, Kassin, Lewis, Cantor, 1980; Reber & Allen, 1978; Reber & Lewis, 1977; Reber, 1976). If subjects were told to search for rules in an artificial language, they would learn in an explicit mode. If the subjects were led to believe that there were no rules governing the stimulus, they would learn in an implicit mode. Instructions to search for rules that underlay less salient stimulus material had a detrimental impact on the subjects' ability to learn about the structure (Reber, 1976). Explicit search for rules produced a strong tendency for subjects to induce or invent rules, which were not accurate representations of the stimulus structure. Subjects who, by instruction, were induced to learn in an implicit mode were able to memorise exemplars of the stimulus structure better.

According to the theory, the ideal combination of problem saliency and learning mode would be to learn in an explicit mode if the problem is salient and to learn in an implicit mode if the problem is not salient. The implication of these findings is that people should be induced to learn in a mode that corresponds to the problem saliency.

Clearly, this theory is highly relevant for HCI design if it can be shown that different user-interfaces can induce the two learning modes. Hence, the motivation for the research presented in this paper was to apply the theory to HCI.

2.2 Information Modes in HCI

Different information modes are determined by the user-interface. In HCI the user-interface can be defined as "the parts of the system with which the user comes into contact physically, perceptually or cognitively." Consequently, the user-interface includes the hardware (e.g. keyboard, mouse, joy-stick, touch screen), as well as software features (i.e. the graphical and structural organisation of a program). In the following, we shall analyse how both hardware and software aspects of the user-interface are integrated in the information processing of complex learning tasks.

2.2.1 Interaction - Tools and Learning Modes

Various interaction tools can induce different learning modes. Some interaction tools require the user to interact directly with the objects of interest (e.g. by directly controlling graphical objects with a mouse). Other interaction tools demand that the user interact by intermediary actions, e.g. by typing commands.

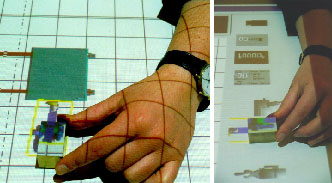

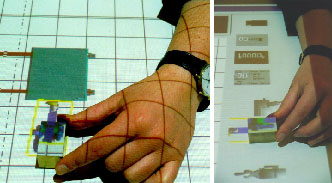

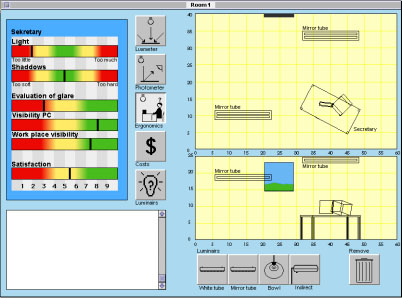

Figure 1 shows an interface in which an augmented reality technique incorporates real and virtual objects. The users can "grasp" the virtual objects, which are projected on a normal desktop surface, with a brick.

Figure 1. Desk view of an augmented reality interface.

When the cognitive impact of two given interaction tools differs enough, different learning modes can be induced. Recent research shows that a user interface can radically influence the way people learn by means of a computer. Our research shows that these findings also apply to different interaction tools. An interaction tool that induces a learning mode also has consequences for the learning performance (Guttormsen Schär, 1996; Guttormsen Schär, 1997b). For example, interaction tools that increase the cognitive load on students when learning (e.g. by requiring the users to type commands in order to interact) can induce an explicit learning style. Interaction tools implying little cognitive load on the users, such as interaction by direct manipulation with graphical objects, tend to induce a more implicit, trial and error learning mode. The success of learning a certain task is closely linked to the chosen learning strategy, because some strategies are more powerful than others (e.g. the success of implicit and explicit learning modes depends on the task saliency). Consequently, the interaction tool can play a major role in successful learning by supporting or inhibiting certain strategies.

2.2.2 Navigation Methods and Learning Modes

CAL applications demand several kinds of user navigation methods. The navigation strategy is to some extent defined by the characteristics of the learning system. There are many methods of moving between different states, pages or masks in a program. The program can apply one or more methods, and this will have an impact on how the information will be perceived. The navigation strategy also influences the knowledge acquisition, depending on whether an implicit or an explicit navigation strategy can be induced by the navigation tool. Navigation in a free structured system (e.g. hypertext) can support an implicit learning strategy, because the structure supports spontaneous and unstructured movements (and also because such systems are often highly unsalient). Browsing is an example of an implicit navigation strategy. The hypertext/media based structure of the Internet supports navigation by browsing. Browsing is a way of seeking new information; the information can be unknown, but anticipated. Browsing is a member of a set of information seeking activities, or strategies, best suited to covering a large and complex area without going into too much detail. Browsing has associative activities of exploring, scanning and extending. The purpose of browsing may be quite implicit or tacit in the understanding of the searcher.

Simulations are similar to hypertext/media systems in that there is no pre-defined structure of the action sequences. Simulations that incorporate spatial movements, e.g. between rooms in a virtual building, must implement navigation methods and tools, which enable users to walk around. However, because many simulations are characterised by a more tacit change of states depending on the user actions, the aspects of navigation are different from browsing. Hence, operating a simulation is neither browsing nor non-browsing. In simulations demonstrating complex relationships, navigation becomes a matter of selecting the right cognitive strategy for the optimal sequence of actions. The knowledge discovery comes gradually by induction, where in hypermedia systems the knowledge may be explicitly presented on one node.


Non-browsing is more purposeful and focused than browsing. It is more concerned with having a model of what might be possible and exploring options to see which meets chosen criteria. One example is navigation in a structured environment, when people move linearly between pages in a computer-based training program (CBT). CBT programs often use some kind of a book metaphor, in that the learning material is presented in chapters to be studied in a certain order. This can support an explicit learning strategy because such systems reduce the orientation load and free more attention for the information content. Most computer-based training applications support non-browsing navigation. In such environments the navigation is supported by a structured system. The students are supposed to navigate through the learning material in a structured way; for example, buttons bring the user pages forward or backwards. This kind of navigation was typical of Hypercard© applications. Non-browsing strategies can also be employed in a hypertext/media system by users who apply an explicit search strategy to consider the search activities consciously. This can be achieved by purposeful use of available navigation aids like histories or guided tours. Such navigation aids can serve as cognitive tools, i.e. by helping users to follow a structured search strategy. An example of a history used as a cognitive tool is shown in Figure 4.
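As a rough illustration of how a history can act as a navigation aid, the sketch below records the trail of visited nodes and reports which nodes were revisited. The class name, its methods and the node names in the usage note are hypothetical; the text does not describe the contents of the history used in our hypertext system, so this is only one plausible shape for such a tool.

```python
from collections import Counter


class NavigationHistory:
    """Hypothetical history aid for a hypertext system (illustrative only)."""

    def __init__(self):
        self.trail = []          # nodes in the order they were opened
        self.visits = Counter()  # how often each node was opened

    def visit(self, node):
        """Record that the user opened a node."""
        self.trail.append(node)
        self.visits[node] += 1

    def back(self):
        """Step back to the previous node; return it, or None if impossible."""
        if len(self.trail) < 2:
            return None
        self.trail.pop()
        return self.trail[-1]

    def revisited(self):
        """Nodes opened more than once, a possible sign of disorientation."""
        return sorted(n for n, count in self.visits.items() if count > 1)
```

For example, after visiting `"home"`, `"lighting"`, `"glare"` and `"lighting"` again, `revisited()` returns `["lighting"]`, which a user could consult to search in a more structured way.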

2.2.3 Feedback and Learning Modes

Feedback about learning performance is closely related to how the information is presented. Both the feedback content (i.e. information about the learning performance) and the feedback form (i.e. how the information is presented) are relevant. Different forms and content of feedback are shown in Table 1. In the following, we will describe in more detail the feedback forms employed in our research.

Visual feedback can be direct or indirect. Direct visual feedback occurs when an action immediately causes a reaction of the program: e.g. a window opens when a program is started, or the scroll bar in a text window scrolls the text. Visual feedback is one of the most powerful effects in simulation-based learning. Interactive simulations can give continuous visual feedback about the effects of different actions in the system. Interaction with graphical interfaces by direct manipulation implies continuous visual feedback about the location and state of objects. This feedback contains indirect information that supports continuous reflection on the ongoing actions. The students interpret the new states of the simulation based on their actions; hence, a series of actions (consciously and purposefully, or implicitly and intuitively) can follow.

| Feedback forms | Feedback content |

| Visual | Response |

With increasing processing power, continuous feedback to the user is appealing. The effect of this kind of feedback was investigated in experiment 5, referred to below. An effect of feedback in this case would not only be related to aspects of the feedback itself, but would also indicate the degree to which users are influenced by apparently unimportant interface aspects in general. Two actual examples from commercial software illustrate different forms of subtle feedback. The two examples represent continuous and discontinuous methods of feedback respectively:

From a pedagogical point of view, different feedback content represents an important feature of a CAL system. Verbal forms of feedback communicate direct messages and are, therefore, closely linked to content feedback. Response feedback provides an evaluation of the appropriateness of an action. Approach feedback relates to the chosen task solving strategy. It is realistic for simple tasks with few parameters, but more difficult to design for complex tasks. Motivation feedback is intended to increase the effort put into learning. The source of motivation is an individual matter; thus, many factors may have an influence. It is important to analyse carefully what motivates the target group of a learning program before deciding what to implement. Many effects can serve as cognitive feedback depending on the teaching strategy. Cognitive feedback is involved in all interactions with a system in which the students can observe the consequences of their own actions. In particular, visual feedback in simulations can have great impact on the learning performance, by either explicitly or implicitly contributing to knowledge acquisition. Simulation promotes much indirect cognitive feedback; in fact, the idea is not based on instruction, but on letting the students implicitly unveil the learning goals.

3. Experiments

We have based our discussion in the theory part of this paper on three principles, which can stand out as basic hypotheses for the empirical part below:

In five experiments, we applied the theory of implicit and explicit learning to HCI by testing whether different user-interface factors could induce the two learning modes. The following interfaces were tested: interaction tools (in experiments 1, 2 and 3), navigation methods (in experiment 4) and feedback (in experiment 5). In each of the experiments we compared interfaces that we expected to induce different learning modes. We found that the interaction patterns and task performance varied between the compared interfaces. These results supported the hypothesis that the user-interface can induce a learning mode. Further, we found that the dependent variables reflecting the theoretical approach (i.e. cognitive performance) and the human centred design approach (i.e. efficiency) supported different user-interfaces.

The fact that our results show that the chosen research approach influences the outcome of a study is, of course, not revolutionary. However, our more important finding is that the threat of biased results is not adequately considered in HCI research. Studies in HCI or CAL should include a better control for mono-operation bias (i.e. measuring an effect with too few variables, or variables only reflecting one factor) by including many test factors. Mono-operation bias is a methodological problem which represents a threat to the construct validity because too few operations under-represent the construct in question. One example is when the goal is to examine the effect of a new user-interface on performance. Performance is the construct to be operationalised. Theoretically there are many ways to do this, e.g. by a combination of subjective (e.g. did you think you performed better with interface x than with interface y?) and objective (e.g. how many actions were necessary to reach the goal?) measures. Instead, performance is often measured only by time to task completion, without considering that other factors like the quality of the result or the effort (e.g. by a measure of activity level) may also be appropriate.
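One way to reduce mono-operation bias in practice is to derive several measures from the same interaction log instead of total time alone. The sketch below assumes a hypothetical log of timestamped actions; the `Event` fields, the function name and the definition of thinking time as the sum of pauses above a threshold are illustrative assumptions, not the operationalisations used in our experiments.

```python
from dataclasses import dataclass


@dataclass
class Event:
    t: float      # seconds since the start of the task
    action: str   # label of the user action (hypothetical)
    ok: bool      # whether the program accepted the action


def performance_measures(events, pause_threshold=5.0):
    """Derive several performance measures from one interaction log."""
    if not events:
        return {"total_time": 0.0, "n_actions": 0,
                "error_rate": 0.0, "thinking_time": 0.0}
    times = [e.t for e in events]
    # Pauses between consecutive actions.
    gaps = [later - earlier for earlier, later in zip(times, times[1:])]
    errors = sum(1 for e in events if not e.ok)
    return {
        "total_time": times[-1] - times[0],
        "n_actions": len(events),
        "error_rate": errors / len(events),
        # Assumption: long pauses are counted as thinking time.
        "thinking_time": sum(g for g in gaps if g >= pause_threshold),
    }
```

Reporting these values side by side makes it visible when an interface is fast but error-prone, which a single time measure would hide.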

In the sections below, we provide a comprehensive summary of the five experiments. A full description can be found in the reference to each experiment.

3.1 Experiment 1 (Guttormsen Schär, 1996)

| Question: | Which interaction tool is optimal for learning algorithms with relatively low complexity? |

| Hypothesis: | Command input is optimal for learning semi-complex algorithms. |

| Task: | Computer based interactive exploration of Tower of Hanoi |

| Independent variables: | Command based interaction vs. direct manipulation |

| Dependent variables: | Efficiency: total time. |

| Design: | 2 x interaction tool (n = 12), between group |

| Results: | Main effect of interaction tool. Efficiency: direct manipulation optimal. Cognitive performance: command based interaction optimal. |

Instruction: Move the discs and discover the rules.

Implementation: The program stopped when all discs were moved from Start to Goal. The program only allowed moves complying with the shortest sequence of moves to reach the goal. A bigger disc could not be put on top of a smaller one. False moves were indicated with a "beep" and the disc was put back in its original position. A correctly positioned disc could not be removed.
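The behaviour described above can be sketched as follows. This is a minimal reconstruction under stated assumptions, not the original program: the peg names, the class `HanoiTrainer` and the default number of discs are hypothetical. Because only moves on the shortest solution path are accepted, the rule that a bigger disc may not be placed on a smaller one is enforced implicitly.

```python
def optimal_moves(n, src="start", dst="goal", via="spare"):
    """Yield the shortest move sequence as (from_peg, to_peg) pairs."""
    if n == 0:
        return
    yield from optimal_moves(n - 1, src, via, dst)
    yield (src, dst)
    yield from optimal_moves(n - 1, via, dst, src)


class HanoiTrainer:
    """Accepts only the next move of the shortest solution (illustrative)."""

    def __init__(self, n_discs=3):
        # Discs are numbered by size; the largest sits at the bottom.
        self.pegs = {"start": list(range(n_discs, 0, -1)),
                     "spare": [], "goal": []}
        self.remaining = list(optimal_moves(n_discs))
        self.false_moves = 0

    @property
    def solved(self):
        return not self.remaining

    def try_move(self, src, dst):
        """Apply the move if it is the next optimal one; otherwise reject it."""
        if self.solved:
            return False
        if (src, dst) != self.remaining[0]:
            self.false_moves += 1  # the original program signalled this with a beep
            return False
        self.pegs[dst].append(self.pegs[src].pop())
        self.remaining.pop(0)
        return True
```

With two discs, `try_move("start", "goal")` is rejected as a first move, whereas `("start", "spare")`, `("start", "goal")`, `("spare", "goal")` in that order solves the task.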

Figure 2. Tower of Hanoi.


3.2 Experiment 2 (Guttormsen Schär, 1996)

| Question: | Which interaction tool is optimal for solving simple creative problems? |

| Task: | Computer aided simple drawing tasks. Structured Task: Draw a house, with some restrictions on the procedure. Open task: Draw freely a concrete object of your own choosing. |

| Hypotheses: | Command input is optimal for solving structured drawing tasks. |

| Independent variables: | Command based interaction vs. direct manipulation |

| Dependent variables: | Efficiency: total time, thinking time |

| Design: | 2 x 2 mixed (n = 20). 2 x interaction tool = between group, 2 x task = within group (i.e. all subjects solved both tasks) |

| Results: | No effect of tasks. Effect of interaction tool: |

3.3 Experiment 3 (Guttormsen Schär, Schierz, Stoll, & Krueger, 1997a)

| Question: | Which interaction tool is optimal for learning in a complex computerised simulation based learning environment? |

| Hypotheses: | Command input is optimal for non-complex learning. |

| Task: | Learning rules for illumination ergonomics by interacting with a simulation, two complexity levels. |

| Independent variables: | Command based interaction vs. direct manipulation |

| Dependent variables: | Efficiency: total time |

| Design: | 2 x 2 between group (n = 40): 2 x interaction tool, 2 x task complexity |

| Results: | No effect of task complexity. Main effect of interaction tool: |

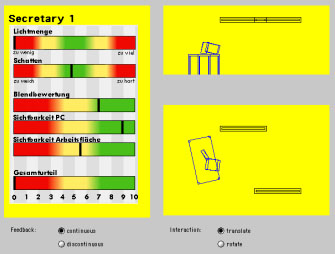

Figure 3. Screen shot of ErgoLight.

3.4 Experiment 4 (Guttormsen Schär, 1997b)

| Question: | Can the two learning modes also be induced by interfaces that vary on perceptual factors only? (In the previous experiments the interfaces varied on motor and perceptual factors.) |

| Hypotheses: | Presenting a history that provides feedback about the search actions (navigation) is optimal for the task performance. No feedback about the search actions (navigation) is less optimal for task performance. |

| Task: | Information search by navigation in a hypertext environment. Two different search tasks: search for facts and inductive information search (i.e. searching and combining information from different nodes). |

| Independent variables: | Feedback by an extended history of search actions vs. no feedback by history |

| Dependent variables: | Efficiency: total time, thinking time |

| Design: | 2 x 2 mixed (n = 30): 2 x feedback = between group, 2 x task = within group |

| Results: | No effect of task. Main effect of feedback: |

Figure 4. Example of feedback by an extended history.

3.5 Experiment 5 (Guttormsen Schär, 1999a)

| Question: | How subtle can variations in the user-interface be, and still induce two different learning modes? |

| Hypotheses: | Discontinuous feedback about learning performance results in optimal learning. |

| Task: | Learning rules for illumination ergonomics by interacting with a complex simulation. |

| Independent variables: | Continuous feedback about performance vs. discontinuous feedback about performance. |

| Dependent variables: | Efficiency: total time, thinking time |

| Design: | 2 x 5 mixed design (n = 24): 2 x feedback = between group, 5 x task = within group |

| Results: | Efficiency: no between group effect |

Figure 5. Screen shot of the simulation: continuous and discontinuous feedback.
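The difference between the two feedback conditions can be sketched in a few lines. The class `FeedbackDisplay`, its callbacks and the scoring function are hypothetical and do not reproduce the ErgoLight implementation; the sketch only assumes that continuous feedback updates the performance display for every intermediate state of an action, while discontinuous feedback updates it once the action is finished.

```python
class FeedbackDisplay:
    """Illustrative performance display with two update policies."""

    def __init__(self, mode, score_fn):
        if mode not in ("continuous", "discontinuous"):
            raise ValueError("mode must be 'continuous' or 'discontinuous'")
        self.mode = mode
        self.score_fn = score_fn  # maps a simulation state to a score
        self.shown = []           # every score presented to the learner

    def on_change(self, state):
        """Called for each intermediate state while an action is in progress."""
        if self.mode == "continuous":
            self.shown.append(self.score_fn(state))

    def on_action_finished(self, state):
        """Called once when the user completes an action."""
        self.shown.append(self.score_fn(state))
```

If a user drags an object through two intermediate states and then releases it, the continuous display presents three scores and the discontinuous display presents only the final one.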


4. Conclusions

Our research has supported the three basic hypotheses:

Cognitive theory offers a background for explaining learning effects in the HCI context. This perspective leads to a more differentiated way of understanding HCI. A theoretical approach offers a model for the impact of a given learning context. The human centred design approach focuses more on direct cause-effect relationships measured by user preferences or efficiency. In a CAL context, such measures alone are not sufficient because they do not incorporate the quality of the achieved learning performance. User-friendly interface design should, however, not be neglected in CAL environments. In particular, experiment 5 showed that an efficient and highly preferred interaction method (i.e. direct manipulation) combined with discontinuous feedback resulted in the achievement of both goals: high satisfaction and high learning performance.

The estimated usability of an interface depends on the selected measures. A learning situation requires a special selection of measures. We have demonstrated that "mono operation bias" is a serious threat to HCI research related to CAL. Further, as an extension of the dominant research focus on technology and multimedia, current CAL research should also take the influence of the computer user-interface more into consideration.

The user-interface has a direct influence on knowledge acquisition. We have shown that the success of learning a certain task is closely linked to the chosen learning strategy induced by the user-interface. Consequently, because the success of a learning strategy is task dependent, the user-interface can play a major role in the success of learning by supporting or inhibiting certain strategies. Even apparently irrelevant factors of the user-interface can influence learning significantly. Hence, users of software are extremely sensitive to any effect imposed by the user-interface. This strongly suggests that we should evaluate new technology critically and in terms of whether it supports the aims set for a learning tool.

Our research demonstrates that CAL may need other standards for the user-interface design than those given by human centred design principles. We are not recommending the application of less user-friendly interaction methods, but we want to extend the user-friendliness concept with a cognitive factor. Cognitive user-friendliness implies that we should not optimise satisfaction and efficiency at the cost of good learning performance.

A crucial aspect on an abstract level is to isolate factors of user-interfaces contributing to the inducement of different learning modes. The inducement of a learning mode is closely related to the perceived cognitive load. Our research shows that user-interfaces that increase the task load (e.g. command interaction, cognitive feedback by extended menu) resulted in a better learning effect. A rational explanation is that these interfaces forced the subjects to learn rationally and consciously in order to reduce the number of interaction steps. This learning approach proved to be most successful for the range of tasks we tested. The timing of feedback also proved to be important. Direct manipulation immediately gives spatial feedback about objects and states of objects, and continuous update of performance feedback reinforces this effect. This induces the less favourable implicit learning mode. On the other hand, command based interfaces result in a discontinuous update of feedback. The same effect was achieved by delaying performance feedback until an action was finished. This resulted in the inducement of the explicit learning mode. Last but not least, the presence of information (such as verbal cognitive feedback) caused the subjects to focus their attention on the ongoing actions. Further research should aim at identifying other factors of the user-interface responsible for the inducement of different learning modes.

5. References

Berry, D.C., & Broadbent, D.E. (1988). Interactive tasks and the implicit-explicit distinction. British Journal of Psychology, 79, 251-272.

Broadbent, D.E., FitzGerald, P., & Broadbent, M.H.P. (1986). Implicit and explicit knowledge in the control of complex systems. British Journal of Psychology, 77, 33-50.

Canter, D., Rivers, R., & Storrs, G. (1985). Characterising user navigation through complex data structures. Behaviour & Information Technology, 4, 93-102.

Duffy, T.M., & Jonassen, D.H. (1992). Constructivism and the technology of instruction: A conversation. Hillsdale, New Jersey: Lawrence Erlbaum.

Engström, Y. (1990). When is a tool? Multiple meanings of artifacts in human activity, learning, working, imagining (pp. 171-195). Helsinki: Orienta-Konsultit Oy.

Fjeld, M., Bichsel, M., & Rauterberg, M. (1998). BUILD-IT: An intuitive design tool based on direct object manipulation. In I. Wachsmuth & M. Fröhlich (Eds.), Gesture and Sign Language in Human-Computer Interaction, Vol. 1371 (pp. 297-308). Berlin: Springer-Verlag.

Gibson, J.J. (1986). The ecological approach to visual perception. Hillsdale: Lawrence Erlbaum Associates.

Guttormsen Schär, S.G. (1996). The influence of the user-interface on solving well- and ill-defined problems. International Journal of Human-Computer Studies, 44, 1-18.

Guttormsen Schär, S.G. (1997b). The history as a cognitive tool for navigation in a hypertext system. M.J. Smith, G. Salvendy, & R.J. Koubek (Eds.), Vol. 21B (pp. 743-746): Elsevier.

Guttormsen Schär, S.G. (1998). Implicit and explicit learning of computerised tasks. The role of the user-interface and task saliency. Doctoral thesis, University of Zürich.

Guttormsen Schär, S.G. (1999a). The effect of continuous vs. discontinuous feedback in a simulation based learning environment. P.o.E.-M. 1999 (Ed.), Ed-Media 1999, Vol. 11 (pp. 190-194), Seattle, USA: B. Collins R. Oliver.

Guttormsen Schär, S.G., Schierz, C., Stoll, F., & Krueger, H. (1997a). The effect of the interface on learning style in a simulation based learning situation. International Journal of Human-Computer Interaction, 9, 235-253.

Hayes, N.A., & Broadbent, D.E. (1988). Two modes of learning for interactive tasks. Cognition, 28, 249-276.

Kunkel, K., Bannert, M., & Fach, P.W. (1995). The influence of design decisions on the usability of direct manipulation user interfaces. Behaviour & Information Technology, 14(2), 93-106.

Maddix, F. (1990). Human-computer interaction. Theory and practice. New York: Ellis Horwood.

McAleese, R. (1989). Navigation and browsing in hypertext. In R. McAleese (Ed.), Hypertext theory into practice (pp. 7-43). Oxford: BSP Intellect books.

Reber, A.S., & Lewis, S. (1977). Implicit learning: An analysis of the form and structure of a body of tacit knowledge. Cognition, 5, 333-361.

Reber, A.S. (1976). Implicit learning of Synthetic Languages: The role of instructional set. Journal of Experimental Psychology: Human Learning and Memory, 2(1), 88-94.

Reber, A.S. (1989). Implicit learning and tacit knowledge. Journal of Experimental Psychology: General, 118, 219-235.

Reber, A.S., & Allen, R. (1978). Analogic and abstract strategies in synthetic grammar learning: A functionalist interpretation. Cognition, 6, 189-221.

Reber, A.S., Kassin, S.M., Lewis, S., & Cantor, G. (1980). On the relationship between implicit and explicit modes in the learning of a complex rule structure. Journal of Experimental Psychology: Human Learning and Memory, 6, 492-502.

Sengupta, K., & Te'eni, D. (1991). Direct manipulation and command language interfaces: a comparison of user's mental models. In H.J. Bullinger (Ed.), Human Aspects in Computing: Design and Use for Interactive Systems and Information management. (Advances in Human Factors/Ergonomics, 18A, pp. 429-434). Amsterdam: Elsevier.

Shneiderman, B. (1987). Designing the User Interface: Strategies for Effective Human-Computer Interactions. Reading, MA: Addison-Wesley Publishing Co.

Torres-Chazaro, O., Beaton, R., & Deisenroth, M. (1992). Comparison of command language and direct manipulation interfaces for CNC milling machines. International Journal of Computer Integrated Manufacturing, 5(2), 107-117.

Trudel, C.-I., & Payne, S.J. (1995). Reflection and goal management in exploratory learning. International Journal of Human-Computer Studies, 42, 307-339.


| IMEJ multimedia team member assigned to this paper | Yue-Ling Wong |